FUJITSU: Unresolved Challenges in SC FTQC

Yoshiyasu Doi, Senior Research Director

Fujitsu Research Quantum Lab

2026.04.02

FUJITSU is a Japanese manufacturer and developer of full-stack quantum computers. It collaborates closely with the RIKEN Center for Quantum Computing in Japan, with the goal of developing a fault-tolerant quantum computer (FTQC) designed and built domestically in Japan. This talk mainly introduced and assessed the challenges that must be addressed on the path toward the practical realization of large-scale FTQC.

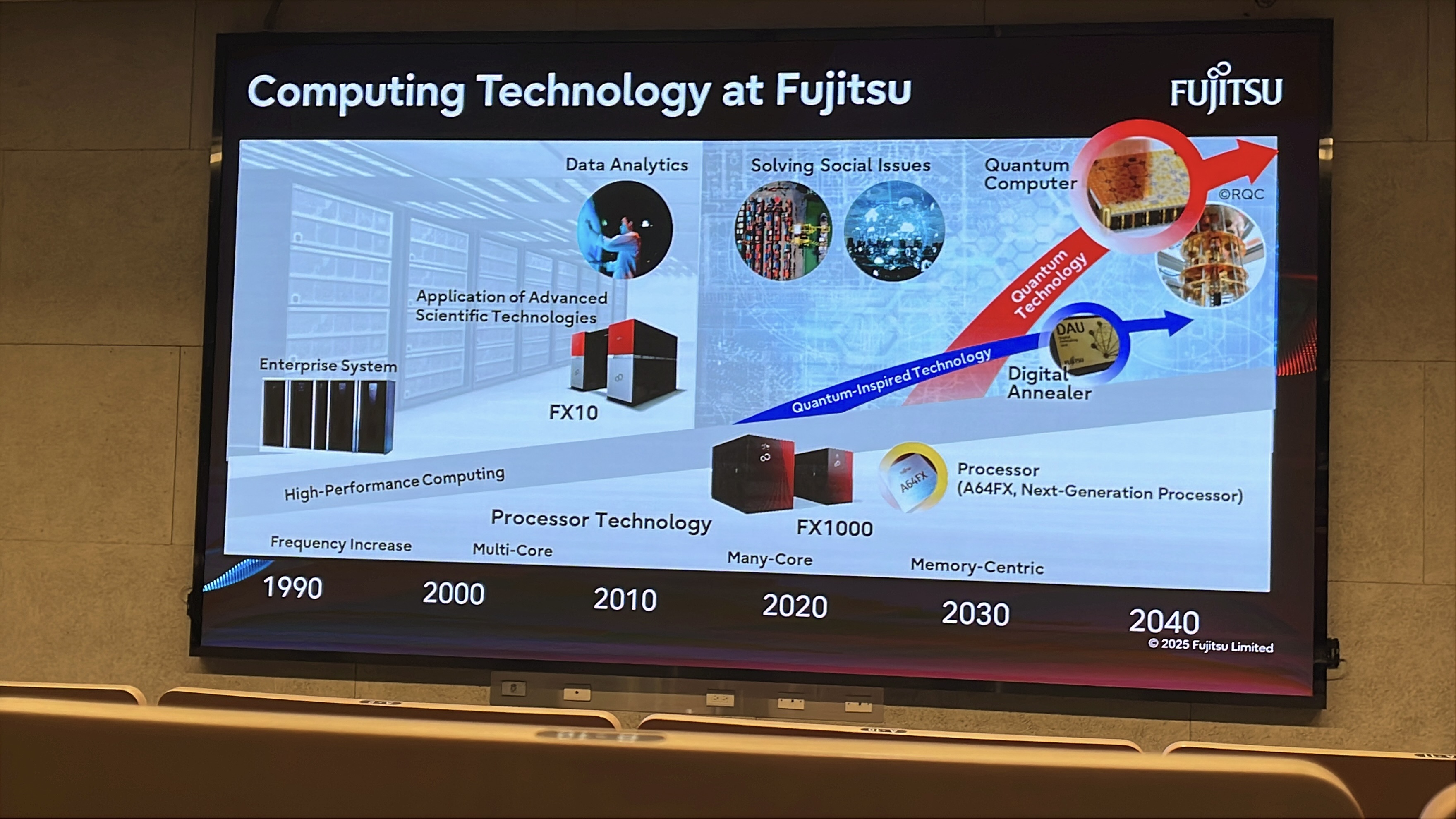

▲ FUJITSU has enterprise-level data center and supercomputing center deployment solutions in Japan. Interestingly, the slide below mentions the ways FUJITSU has improved computational power at different stages:

Higher clock frequency: through high-frequency CPU operation

Multi-core computing: multi-core CPUs

Multi-cluster computing: high-speed interconnection across clusters

In-memory / near-memory computing: overcoming the memory bandwidth wall and attempting to reduce latency caused by data exchange between memory and computing cores.

In addition, FUJITSU is also investing in the computational development of next-generation quantum technologies and quantum-inspired technologies.

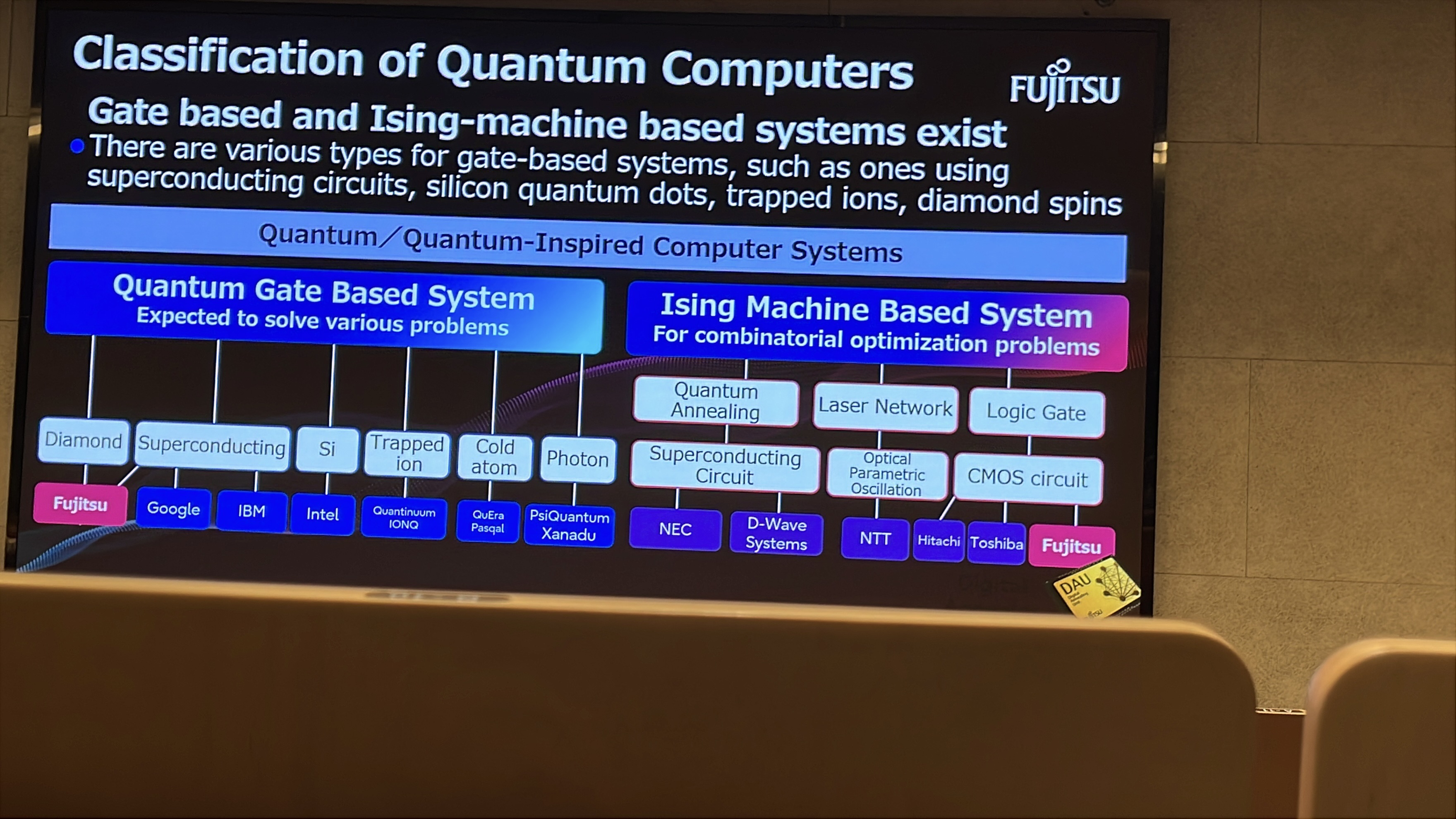

Types of Quantum Computers

▲ Quantum computers can generally be divided into two major categories: gate-based quantum computing and adiabatic quantum computing (annealing). In theory, the two models are computationally equivalent (each can simulate the other with polynomial overhead), but the practical overhead differs depending on the problem.

Gate-based quantum computing is easier to compare with conventional computing, while adiabatic quantum computing is well suited to optimization problems posed as a ground-state search. FUJITSU is investing in the development of multiple such technologies.
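As a rough illustration of what "ground-state search" means, the sketch below finds the lowest-energy spin configuration of a tiny Ising model by classical brute force. It is not a quantum algorithm and is not taken from the talk; the three-spin couplings and fields are hypothetical values chosen only for the example.

```python
from itertools import product


def ising_energy(spins, couplings, fields):
    """Energy E = sum_ij J_ij * s_i * s_j + sum_i h_i * s_i for spins in {-1, +1}."""
    pair_term = sum(j * spins[a] * spins[b] for (a, b), j in couplings.items())
    field_term = sum(h * s for h, s in zip(fields, spins))
    return pair_term + field_term


def brute_force_ground_state(n_spins, couplings, fields):
    """Enumerate all 2**n spin configurations and return the lowest-energy one."""
    return min(
        product((-1, 1), repeat=n_spins),
        key=lambda s: ising_energy(s, couplings, fields),
    )


# Hypothetical 3-spin chain with antiferromagnetic couplings and one local field.
example_couplings = {(0, 1): 1.0, (1, 2): 1.0}
example_fields = [0.5, 0.0, 0.0]

ground = brute_force_ground_state(3, example_couplings, example_fields)
print(ground, ising_energy(ground, example_couplings, example_fields))
```

The enumeration cost doubles with every added spin, which is the scaling problem that annealing-type hardware tries to sidestep by letting the physical system relax toward its ground state.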

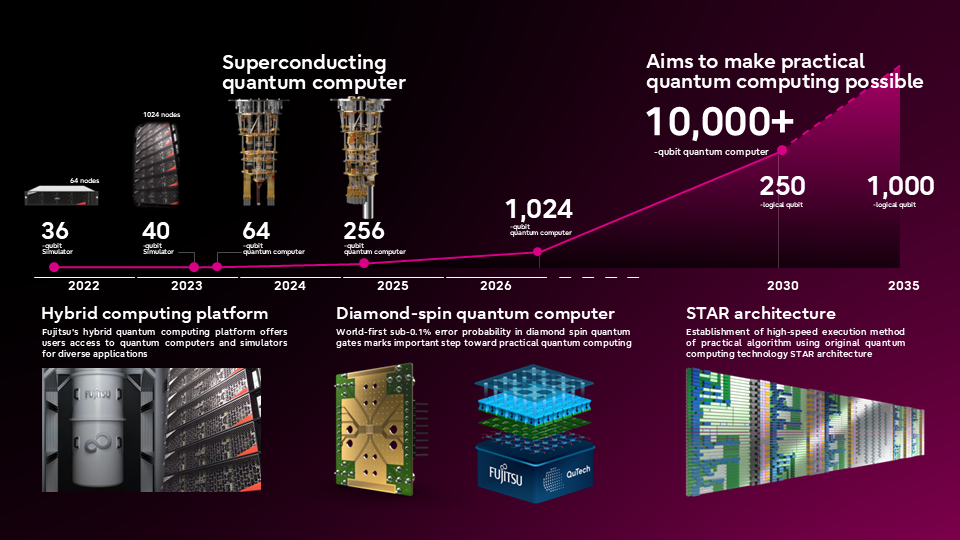

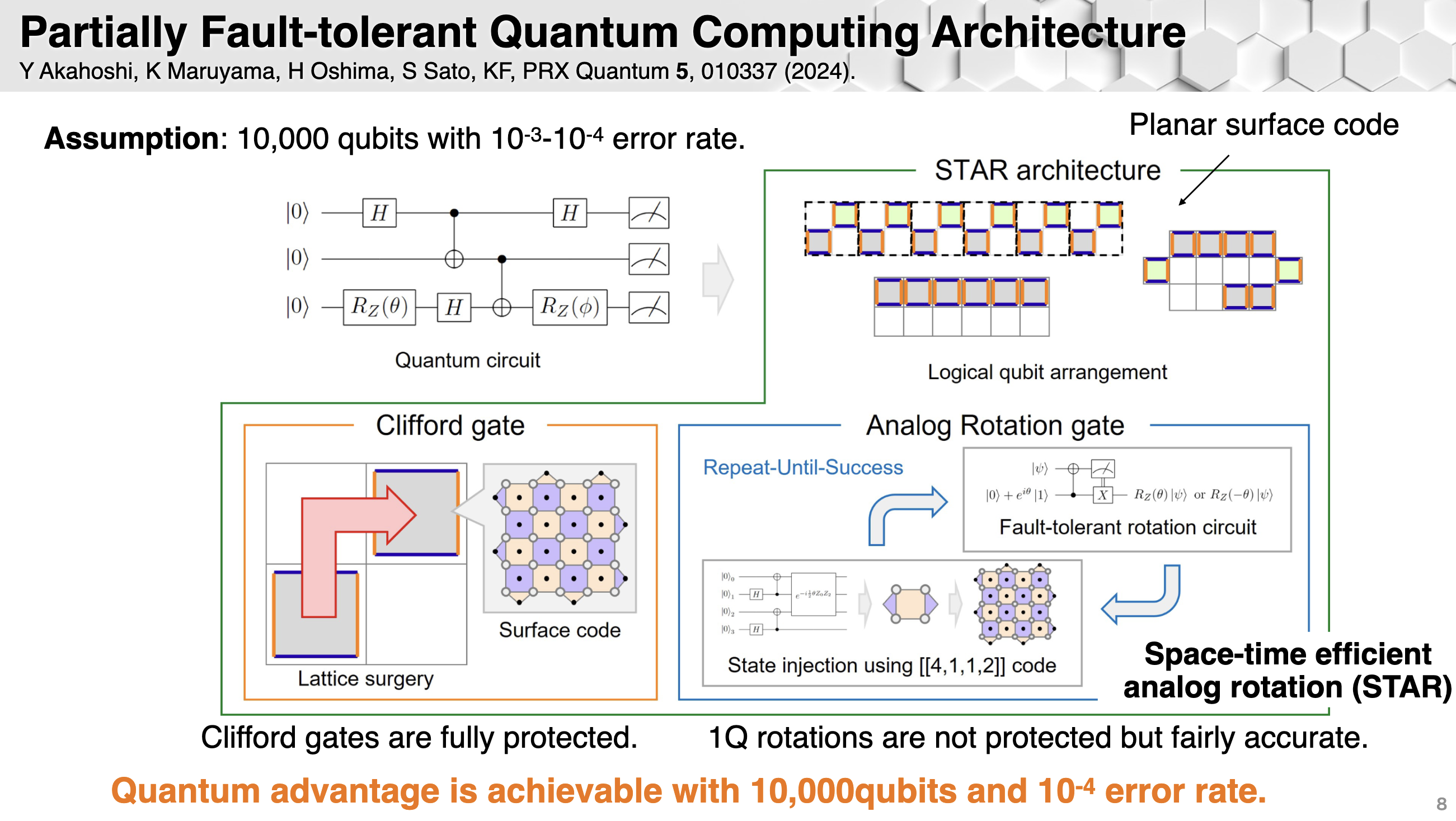

▲ FUJITSU's quantum computer roadmap. FUJITSU's research includes superconducting quantum computers and diamond spin quantum computers. The "STAR architecture" is a quantum computing architecture based on phase rotation gates.

Ref: https://global.fujitsu/en-global/technology/research/quantum

Ref: https://global.fujitsu/-/media/Project/Fujitsu/Fujitsu-HQ/technology/research/quantum/event-202503/FQD2025japan_guest_talk3.pdf

FUJITSU and RIKEN Multi-Qubit System Development

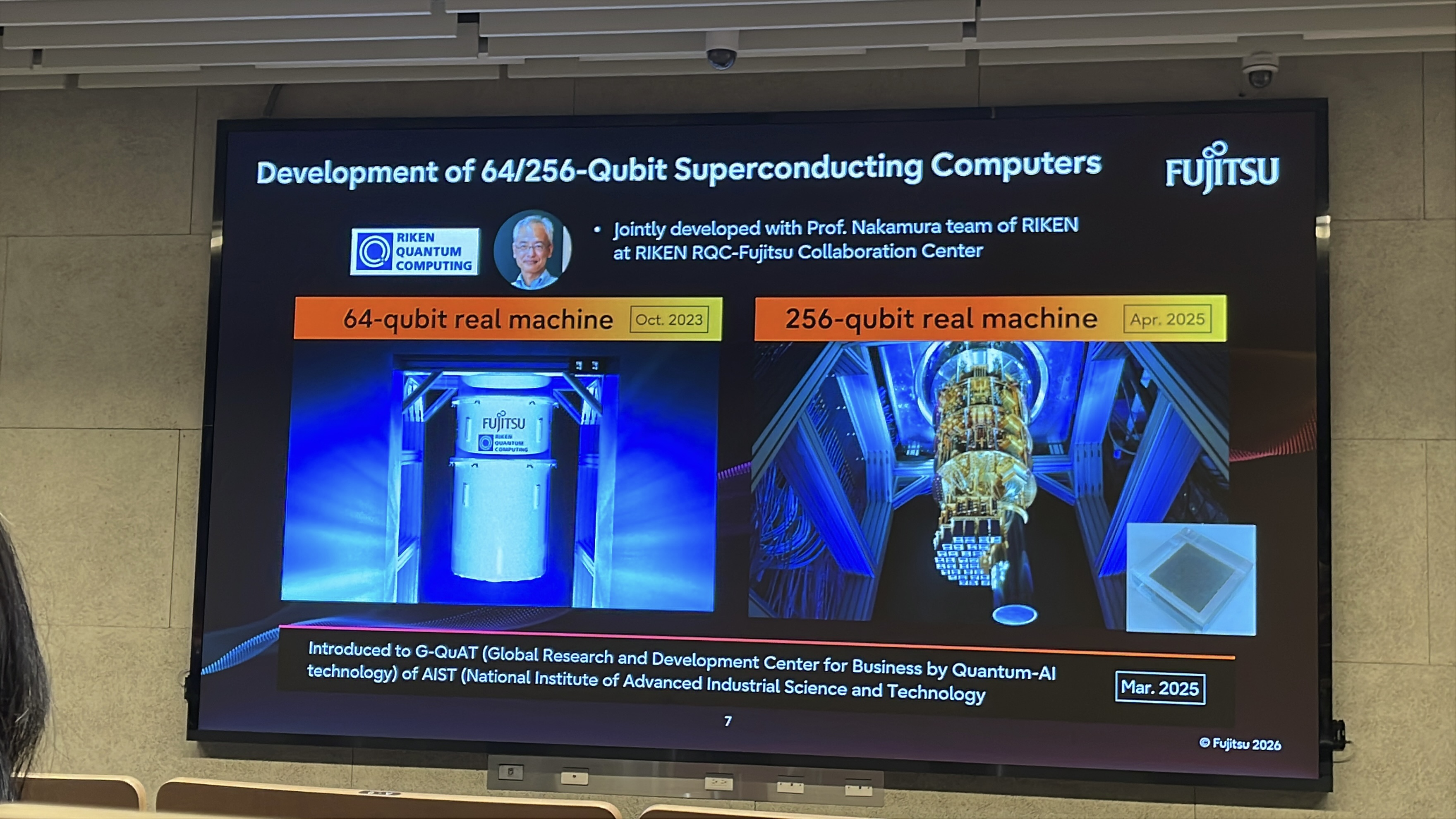

▲ FUJITSU is collaborating with Yasunobu Nakamura, who leads the RIKEN Center for Quantum Computing, to develop multi-qubit systems.

In the early days, Professor Nakamura, Distinguished Research Fellow Chi-Tung Chen, and Academician Tsai Chao-Sen of the Research Center for Critical Issues at Academia Sinica in Taiwan collaborated many times to demonstrate the feasibility of qubits based on Josephson junctions, including the Cooper Pair Box and the Flux Qubit. These were predecessors of the modern transmon and laid much of the foundation for the field.

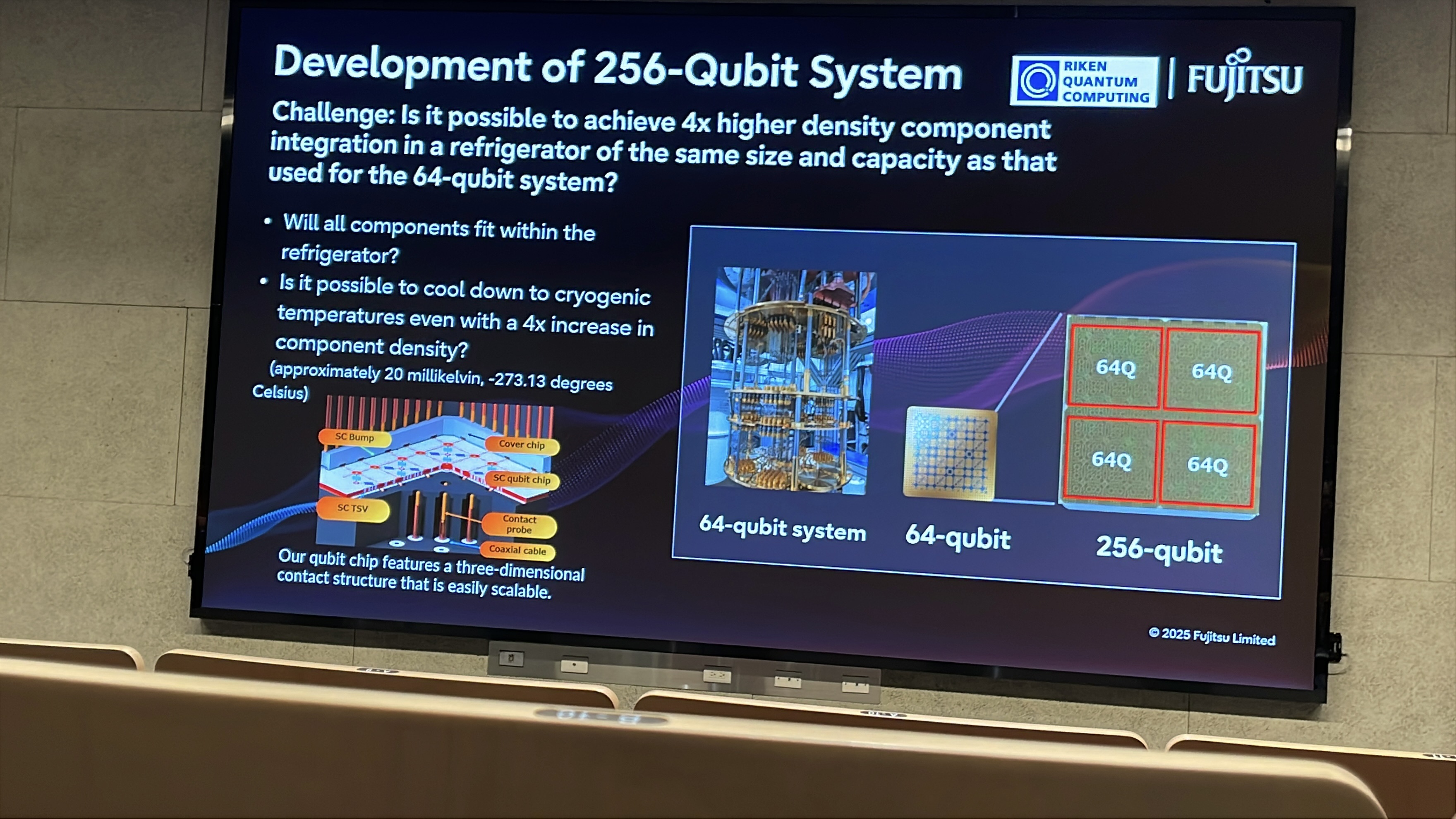

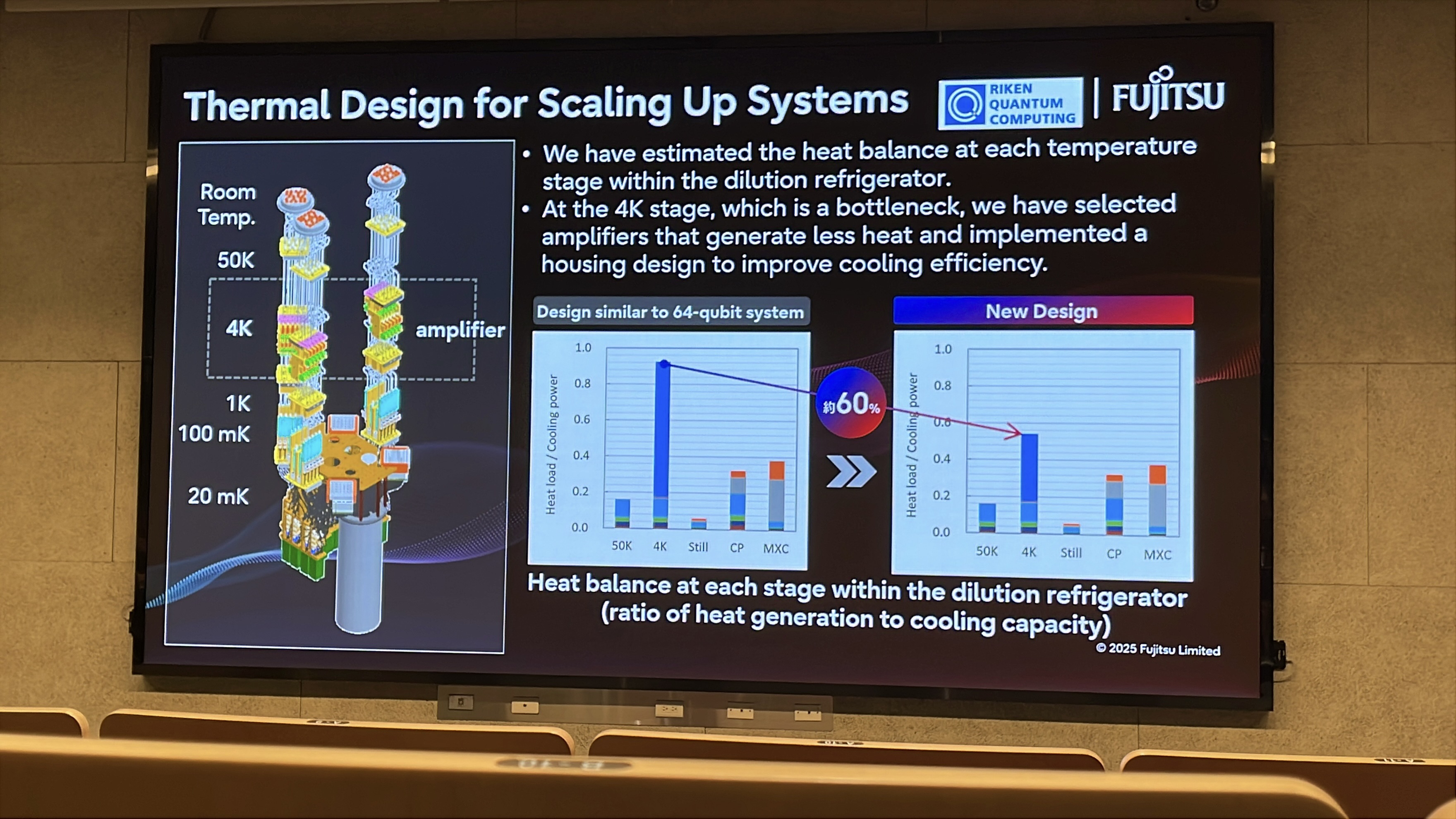

▲ FUJITSU and RIKEN are jointly developing a 64-qubit system and hope to combine modules into a 256-qubit QPU. Key scaling research topics include how to reuse the existing 64-qubit hardware design results, improve device density, and at the same time avoid excessive increases in thermal load.

Large-Area Superconducting Circuit Panels and Qubit Variability

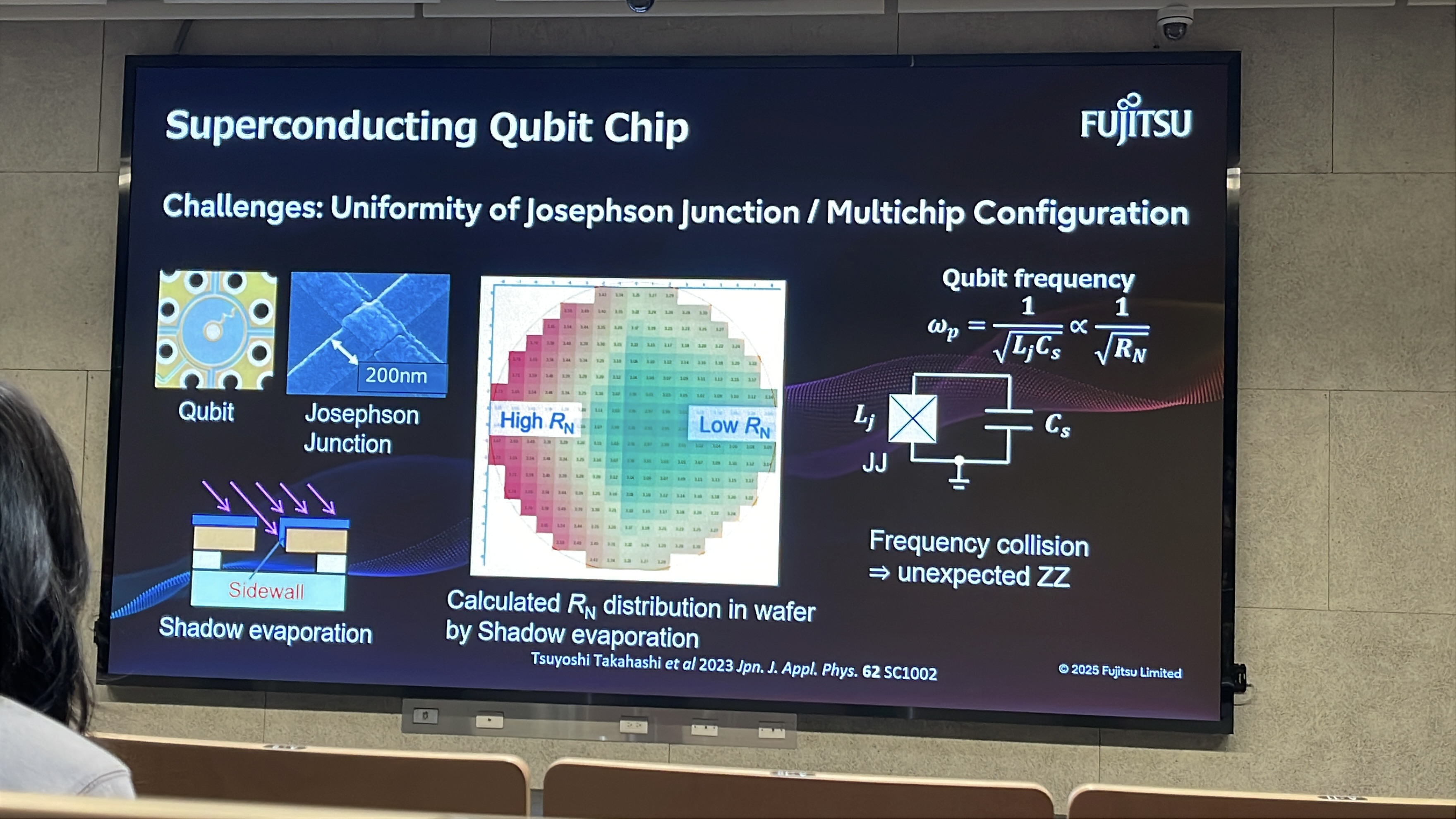

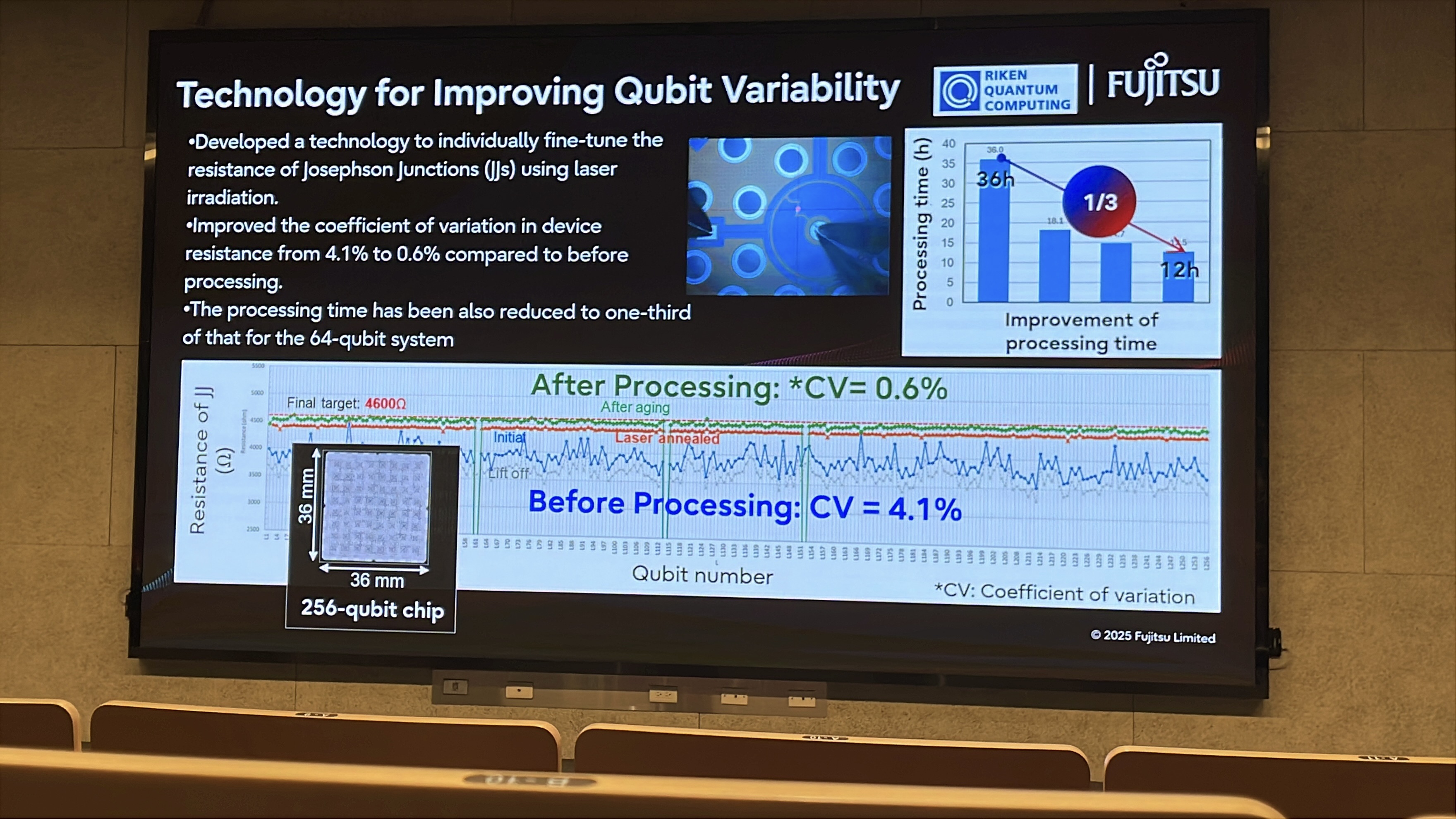

The fabrication of multi-qubit chips is in fact similar to the semiconductor wafer industry: a large number of quantum circuits are integrated on large-area wafers. The related issues of mass production therefore involve the uniformity of qubits across the wafer. Because quantum response is extremely sensitive and Josephson junctions require very high process precision, the properties of superconducting qubits distributed across a wafer do in practice vary to some extent. Post-processing can be used to tune the qubits on the wafer toward more similar characteristics.

▲ This figure shows that when Josephson junctions are fabricated on a wafer, qubits can exhibit different resistance values because of differences in the quality of the oxide / insulating layer in the Josephson junctions. If qubit characteristics cannot be precisely controlled, unexpected crosstalk (ZZ crosstalk) can arise on the QPU.

▲ RIKEN improves qubit uniformity by developing a laser-heating post-processing method. Probes are used to measure qubits one by one, and laser heating is applied to tune the properties of the Josephson junctions.
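To see why the junction resistance matters for uniformity, the sketch below maps a room-temperature normal-state resistance to an approximate transmon frequency. It uses the Ambegaokar-Baratoff relation and the standard transmon approximation; the aluminum gap (170 µeV), charging energy (0.2 GHz), and 8 kΩ resistance are illustrative assumptions, not values from the talk.

```python
import math

PLANCK_H = 6.62607015e-34      # Planck constant, J*s
E_CHARGE = 1.602176634e-19     # elementary charge, C


def josephson_energy_ghz(r_n_ohm, gap_uev=170.0):
    """E_J/h in GHz from the normal-state resistance (Ambegaokar-Baratoff, T = 0).

    gap_uev is the superconducting gap in micro-eV (assumed value for aluminum).
    """
    gap_joule = gap_uev * 1e-6 * E_CHARGE
    critical_current = math.pi * gap_joule / (2 * E_CHARGE * r_n_ohm)  # amperes
    hbar = PLANCK_H / (2 * math.pi)
    e_j_joule = hbar * critical_current / (2 * E_CHARGE)
    return e_j_joule / PLANCK_H / 1e9


def transmon_f01_ghz(r_n_ohm, ec_ghz=0.2, gap_uev=170.0):
    """Approximate transmon frequency f01 = sqrt(8 * E_J * E_C) - E_C, all in GHz."""
    ej_ghz = josephson_energy_ghz(r_n_ohm, gap_uev)
    return math.sqrt(8 * ej_ghz * ec_ghz) - ec_ghz


nominal = transmon_f01_ghz(8000.0)          # hypothetical target junction
shifted = transmon_f01_ghz(8000.0 * 1.02)   # same junction with 2% higher resistance
print(f"nominal {nominal:.3f} GHz, shifted {shifted:.3f} GHz, "
      f"difference {(nominal - shifted) * 1e3:.0f} MHz")
```

Under these assumptions the frequency scales roughly as the inverse square root of the resistance, so a 2% resistance spread moves a qubit near 5 GHz by about 50 MHz. Shifts of this size can be enough to bring neighboring qubits into unwanted frequency relationships, which is why per-junction tuning is attractive.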

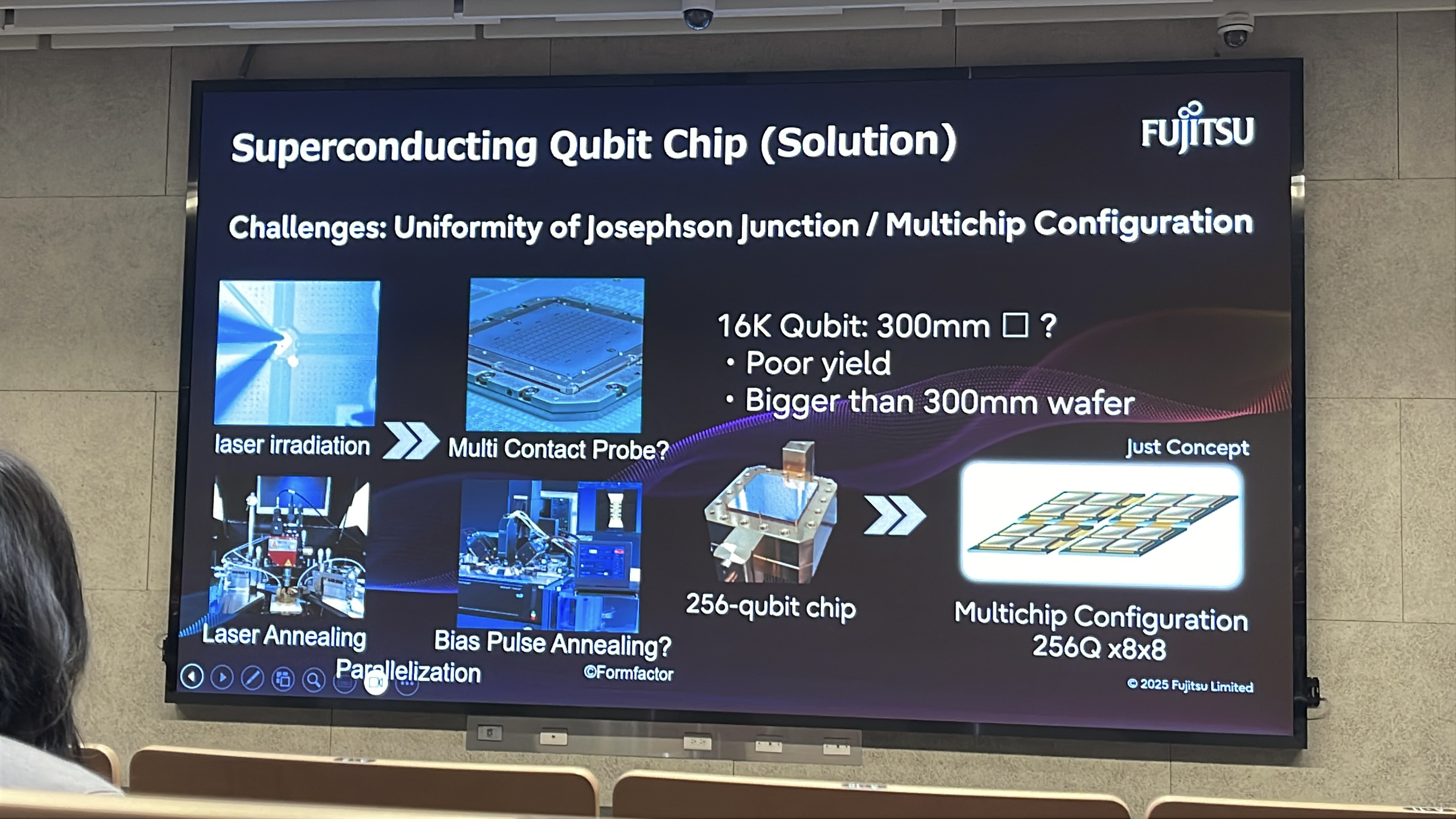

▲ However, as qubit counts grow, measuring each qubit individually and aligning a laser for local heating is unlikely to scale well.

The next generation of post-processing aims to use the wiring already present on the quantum circuit itself to apply bias-voltage pulses directly and thereby homogenize the junctions.

Infrastructure Scale of a Quantum Computing Center

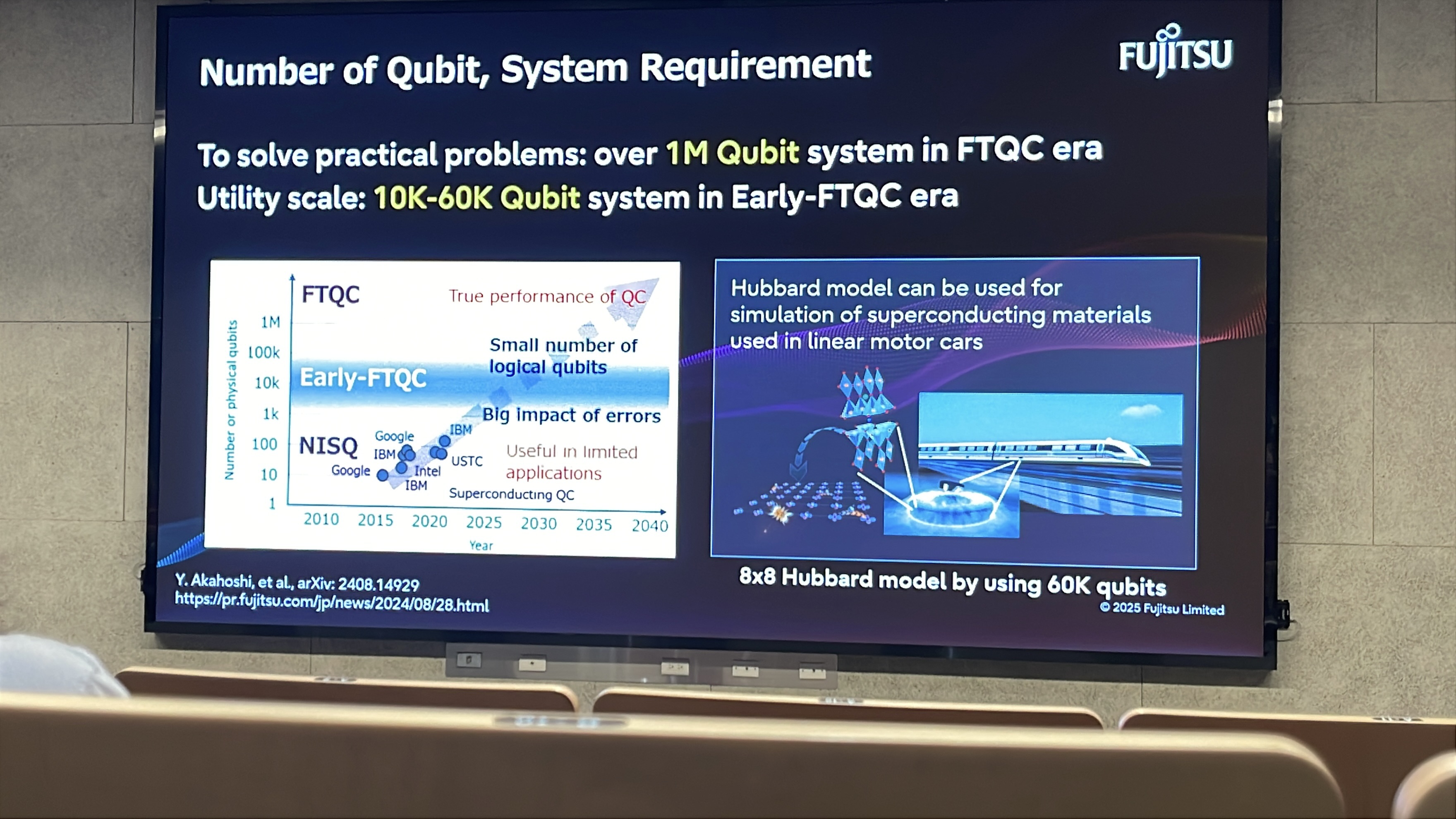

To move toward “practical” quantum advantage, FUJITSU’s development roadmap argues that a quantum computer with on the order of one million (~1M) physical qubits will be required. Because of the demands of quantum error correction, a “practical” logical qubit is currently estimated to require roughly a thousand physical qubits working together.

In experiments and early demonstrations of quantum advantage, by contrast, the tolerance for error rates is relatively high. A logical qubit with error-correction capability may then involve around 100 physical qubits, so chips with 1,000 to 5,000 physical qubits are the targets currently pursued by quantum researchers and companies. With 1,000 to 5,000 physical qubits, equivalent to roughly 20 to 50 logical qubits in computational capability, researchers aim to demonstrate quantum advantage.

For more practical, large-scale quantum supercomputing, however, one needs both more logical qubits operating in parallel and deeper logical circuits (that is, lower error rates or stronger error correction), which often causes the number of required physical qubits to grow quadratically. Larger numbers of qubits also create more complicated crosstalk, which in turn increases error rates and demands even more resources for error correction. This is why practical quantum computing is currently estimated to require an integrated system of around one million qubits in order to deliver useful quantum advantage.

The integration of control instruments and high-power ultralow-temperature cooling for one million qubits would exceed the electricity consumption of today’s AI data centers and place a heavy burden on power infrastructure.

▲ FUJITSU explains the number of qubits required for Early-FTQC and full FTQC.
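The qubit counts quoted above can be sanity-checked with simple arithmetic. The sketch below assumes a rotated surface code (2d² − 1 physical qubits per logical qubit at code distance d) and a commonly used heuristic for the logical error rate; the talk does not state which code or parameters FUJITSU assumes, so the prefactor, threshold, and physical error rate here are hypothetical.

```python
def surface_code_physical_qubits(distance):
    """Physical qubits in one rotated surface-code patch: d*d data + d*d - 1 ancilla."""
    return 2 * distance**2 - 1


def logical_qubit_count(physical_qubits, physical_per_logical):
    """How many logical qubits fit in a given physical-qubit budget."""
    return physical_qubits // physical_per_logical


def heuristic_logical_error(p_phys, p_threshold, distance, prefactor=0.1):
    """Heuristic p_L ~ A * (p / p_th) ** ((d + 1) / 2); A and p_th are assumed values."""
    return prefactor * (p_phys / p_threshold) ** ((distance + 1) / 2)


# Distances whose patch sizes land near the ~100 and ~1,000 figures in the text.
for d in (7, 23):
    n = surface_code_physical_qubits(d)
    p_l = heuristic_logical_error(1e-3, 1e-2, d)
    print(f"d={d}: {n} physical qubits per logical qubit, p_L ~ {p_l:.1e}")

# Budget arithmetic using the round numbers from the text.
print(logical_qubit_count(5_000, 100))        # early-demonstration chip
print(logical_qubit_count(1_000_000, 1_000))  # full-scale machine
```

In this model the physical-qubit cost grows with the square of the code distance while the logical error rate falls exponentially with it, which is one way to read the quadratic growth mentioned above. A distance of 7 gives 97 physical qubits per logical qubit and a distance of 23 gives 1,057, close to the ~100 and ~1,000 figures in the text.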

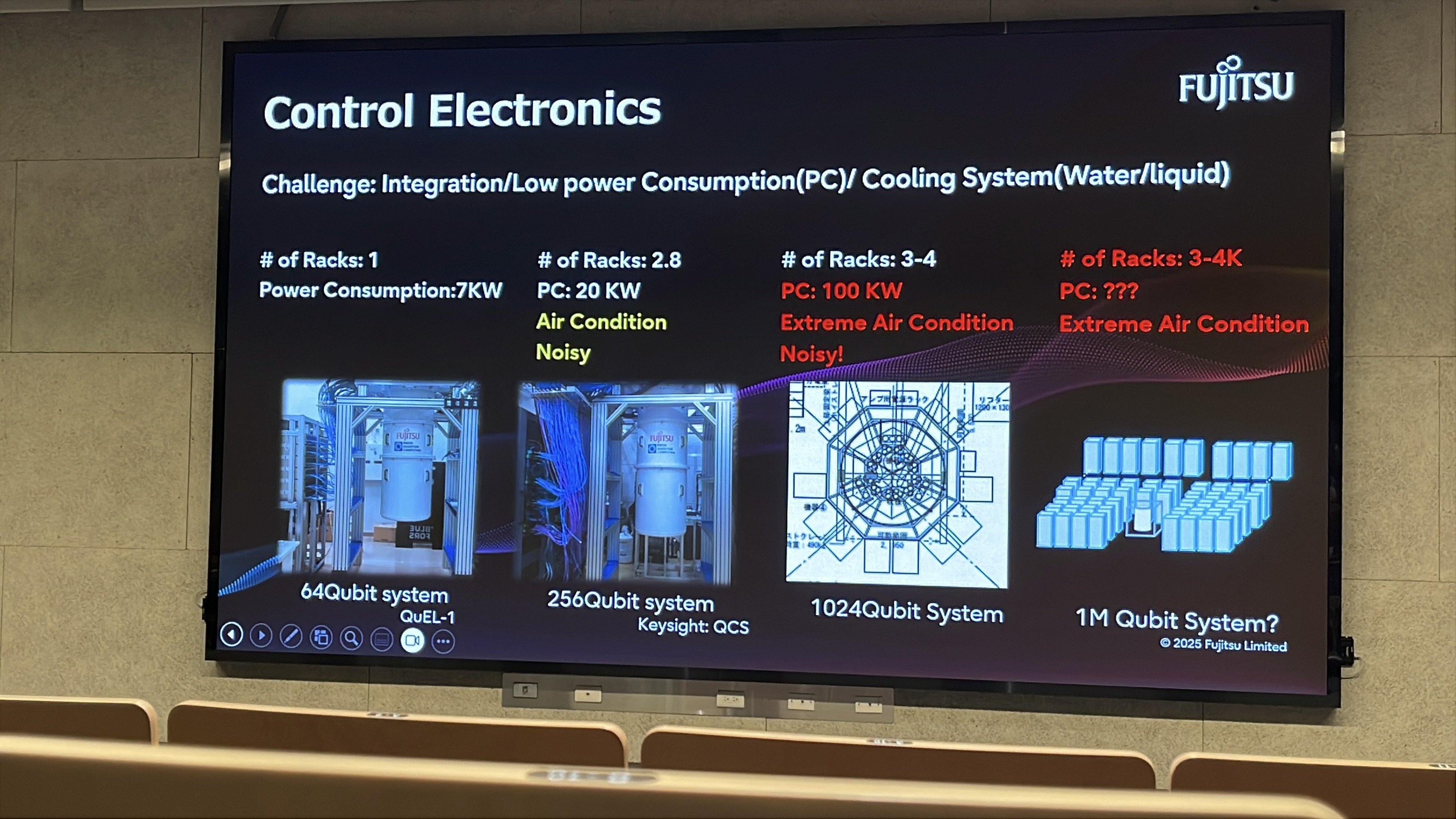

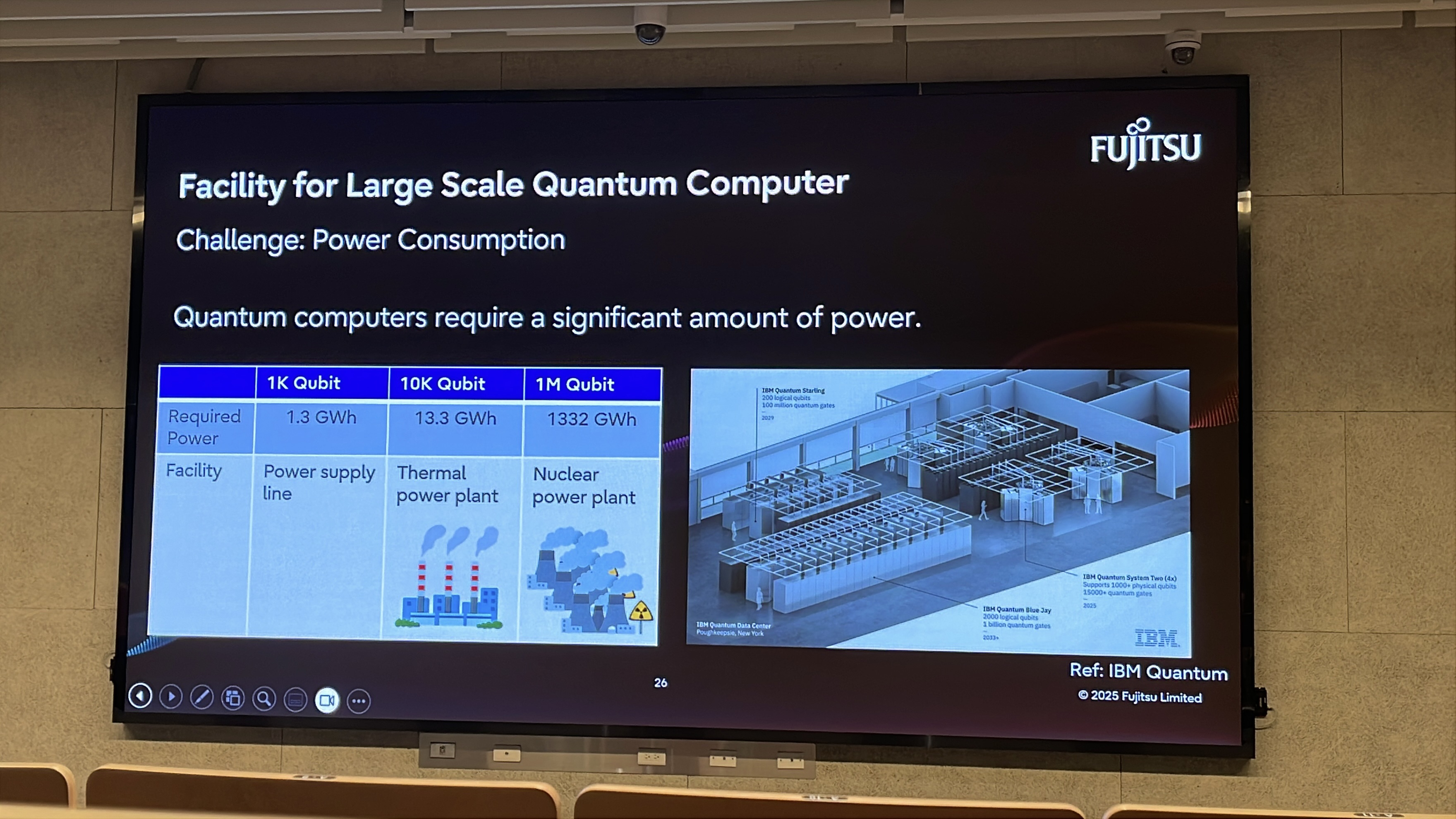

▲ FUJITSU estimates the cooling and power-consumption issues brought by a scaled quantum supercomputing center. On one hand, cooling for ultra-large data centers generates substantial noise, which can interfere with qubits and increase error rates.

On the other hand, the power demand of a quantum supercomputing center is roughly estimated to reach the terawatt (TW) level, corresponding to more than 500 nuclear power plants in installed capacity.
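As a quick order-of-magnitude check on the comparison with nuclear plants, the sketch below converts a number of plants into total installed capacity. The per-plant capacities of 1 GW and 2 GW are assumptions for illustration; the talk's own per-plant figure is not given here.

```python
def total_capacity_tw(n_plants, gw_per_plant):
    """Total installed capacity in terawatts for n_plants of gw_per_plant gigawatts each."""
    return n_plants * gw_per_plant / 1000.0


# Assumed capacities: roughly one large reactor (1 GW) or a two-reactor site (2 GW).
for gw in (1.0, 2.0):
    print(f"500 plants at {gw:.0f} GW each: {total_capacity_tw(500, gw):.1f} TW")
```

Either assumption puts 500 plants at 0.5 to 1 TW, which is consistent with describing the demand as terawatt-scale.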

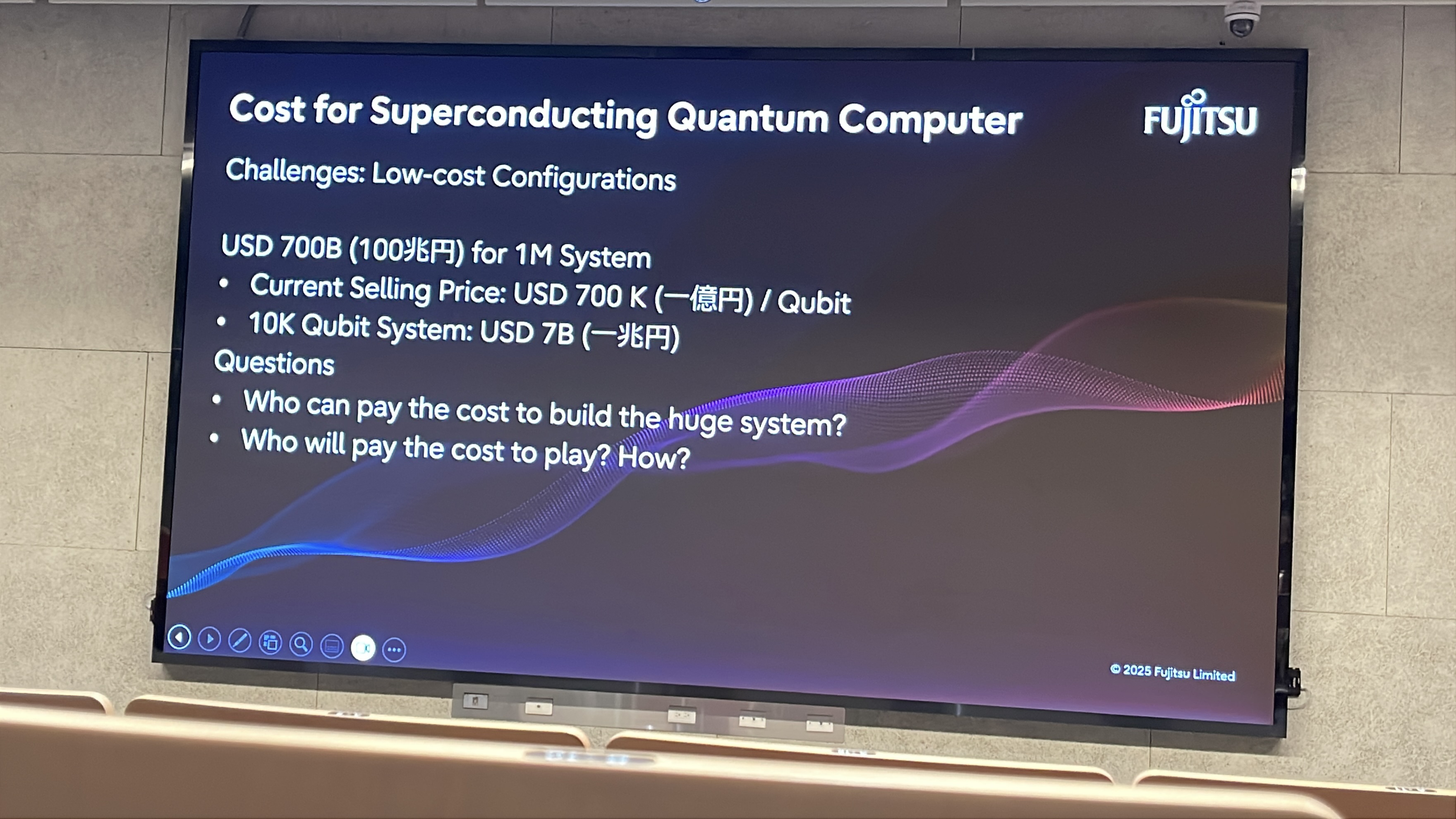

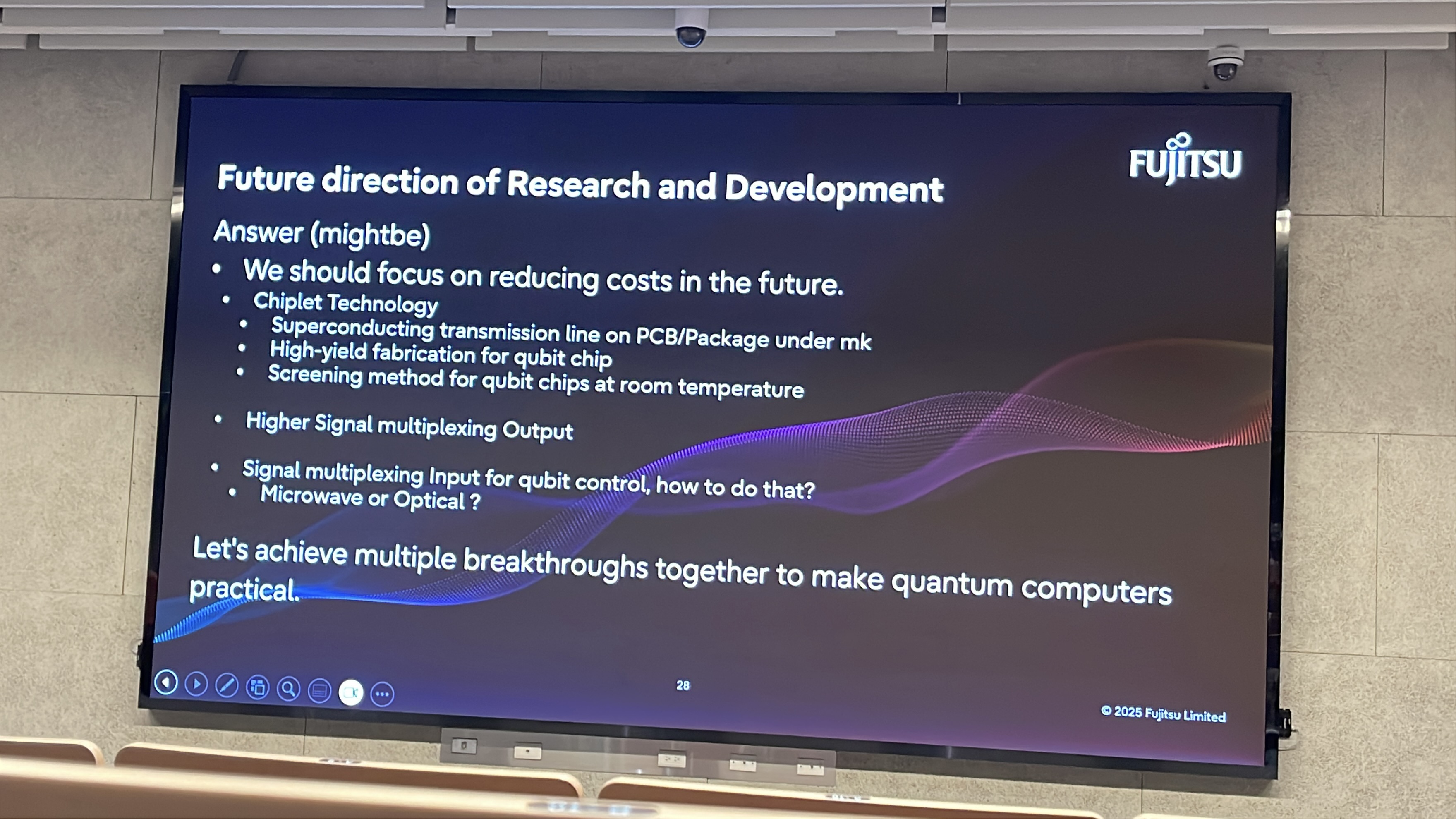

▲ Because the estimated scale of such a quantum supercomputing center is enormous, FUJITSU raises several open questions: how can the relevant infrastructure be achieved, who will help enable it, and how can the associated costs be reduced to make it more feasible?

Originally written in Chinese by the author, these articles are translated into English to invite cross-language resonance.

Peir-Ru Wang
